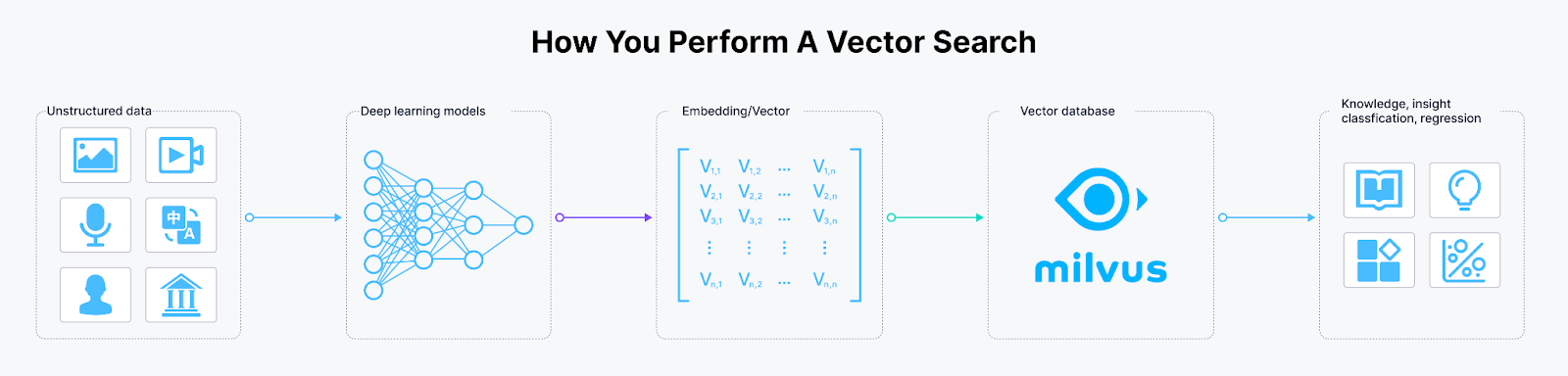

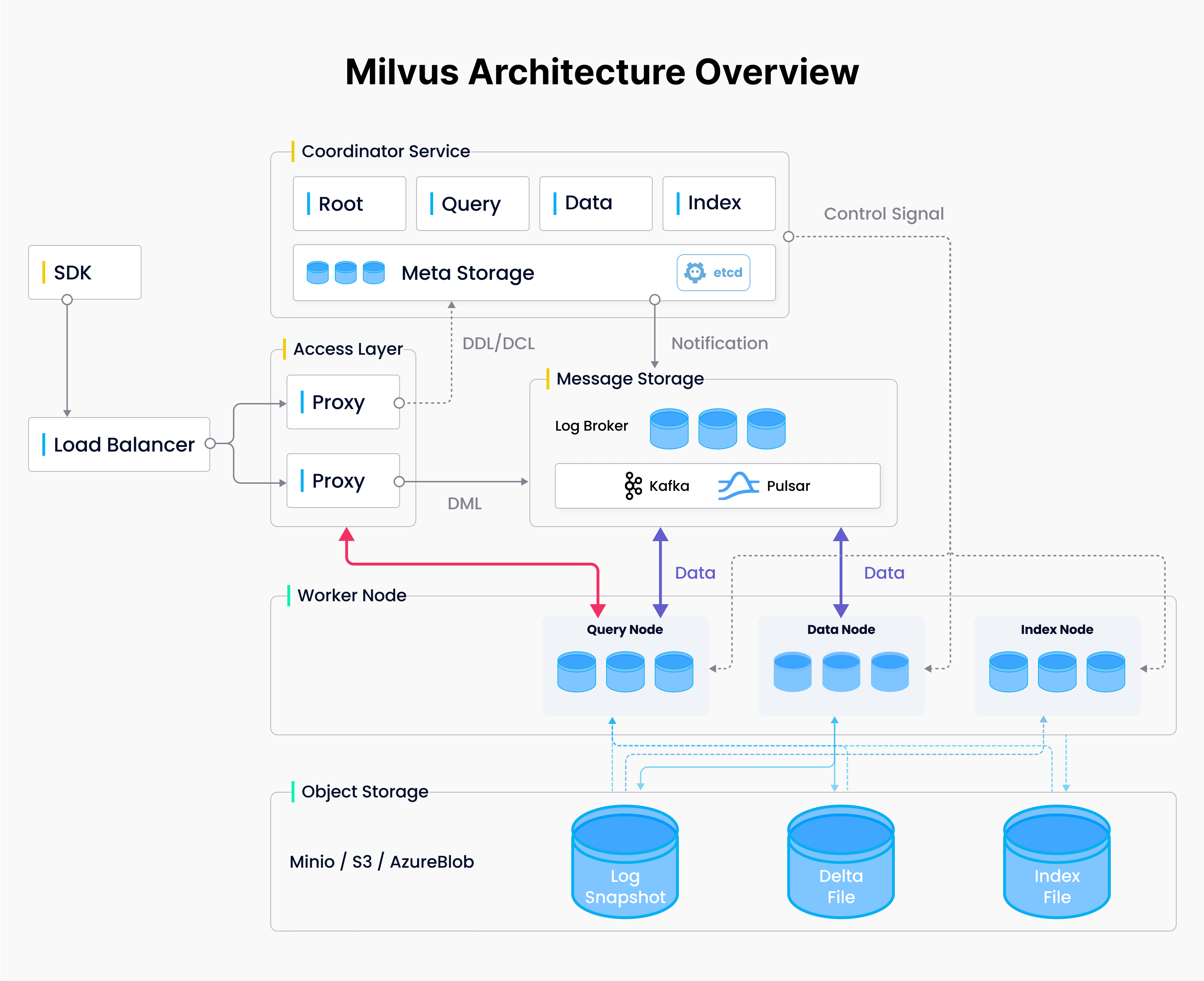

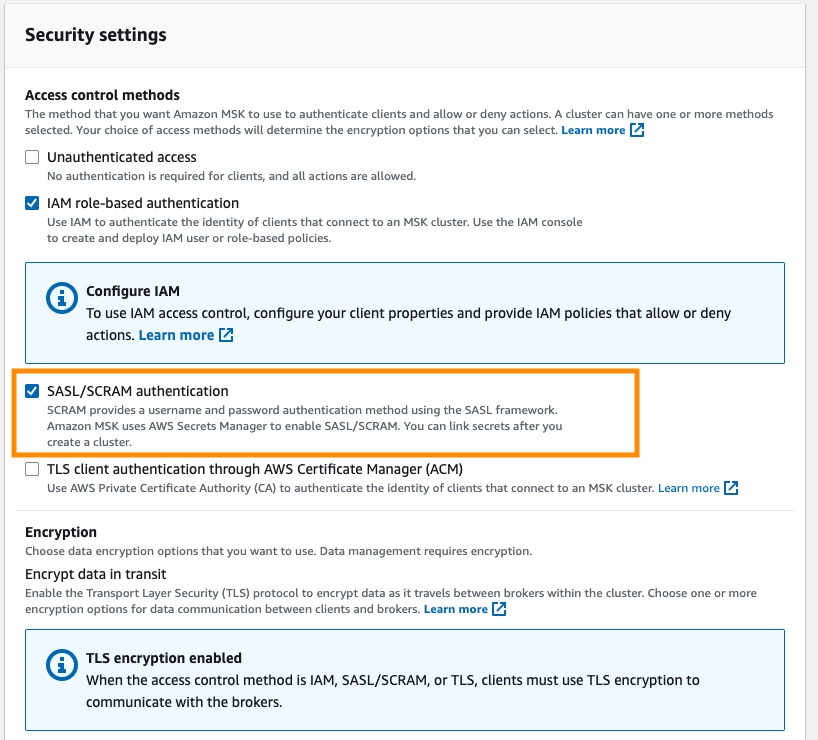

Learn Milvus: Insights and Innovations in VectorDB Technology